Posts tagged “community projects”

Pololu laser-cut parts used by middle school team at the FIRST LEGO League championships

Last week, 7th-grade robotics team ‘Lightning Strikes Twice!’ (LS2!) from Aspen Middle School in Colorado joined 160 teams from 66 countries to compete in the FIRST LEGO League Challenge World Festival held in Houston, Texas. It was their first time qualifying, which followed multiple first-place awards at an event in Fort Collins, Colorado, last November and a second-place finish at the Colorado State Championship in December.

LS2! designed an autonomous turtle robot for coral reef research that uses LEGO electronics and pieces to control the flipper mechanism. Pololu supported the team with laser-cut plywood pieces for mounting the paddles and acrylic pieces for the tail and the watertight body with gasketed openings.

Turtle robot SHELTN2 submerged in a swimming pool.

Working with Pololu was great. The parts arrived quickly and were very well packed. I suggested the students leave 3 mm of clearance around the LEGO structure to account for tolerance in the laser cutting and assembly but the parts were very accurate so we could have made it tighter. We used a combination of Weld-On 4 and 16 to glue the acrylic. The most challenging part was designing for the rubber shift boots we used to seal the joints while allowing movement. SHELTN’s total weight was approximately 7 kg plus 6 kg of ballast to achieve neutral buoyancy.

- William Gilmore, Mentor, Lightning Strikes Twice!
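As a rough check on the figures in the quote above: a neutrally buoyant object displaces its own mass of water, so the approximate masses William gives imply a displaced volume of about 13 liters. The short sketch below does that arithmetic. The water density of 1.0 kg per liter is our assumption (fresh pool water), and the function name is made up for illustration.

```python
# Rough buoyancy check using the approximate masses from the quote.
# Assumption: fresh water at about 1.0 kg per liter.
WATER_DENSITY_KG_PER_L = 1.0


def displaced_volume_liters(robot_mass_kg, ballast_mass_kg,
                            density_kg_per_l=WATER_DENSITY_KG_PER_L):
    """Volume of water a neutrally buoyant body of this total mass displaces."""
    total_mass_kg = robot_mass_kg + ballast_mass_kg
    return total_mass_kg / density_kg_per_l


if __name__ == "__main__":
    volume = displaced_volume_liters(7.0, 6.0)
    print(f"Displaced volume for neutral buoyancy: about {volume:.0f} L")
```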

You can read more about the FIRST Championship in Houston in this FIRST press release, and visit the FIRST LEGO League blog for a full list of challenge and division award recipients.

We’re proud that parts from our Custom Laser Cutting Service helped LS2! bring their design to life and excel in their competition. Congrats to Lightning Strikes Twice! on all of their achievements!

Customer video: Replace your POWER SWITCH on your Arduino or ESP32 projects without reinventing the wheel

Electrical engineer and content creator Dave Crabbe recently released a video demonstrating his use of our pushbutton power switch to control power to his ESP32 remote-controlled toy dump truck. He has an open-source, custom-designed PCB for the electronics, and the Gerber files and schematic are linked from the video description.

If you like Dave’s content, be sure to subscribe to his YouTube channel so you don’t miss his latest videos.

Moving sculpture Kinetic Little Rain

This hypnotic video from customer Alain Haerri shows Kinetic Little Rain, a moving sculpture that was inspired by the sculpture Kinetic Rain in the Changi Airport in Singapore. Alain’s sculpture features 100 blown glass drops that are moved by stepper motors. Each motor is driven by its own Tic T500 Stepper Motor Controller and the whole setup is controlled by an Arduino Mega 2560.

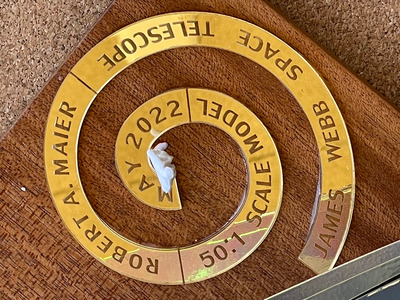

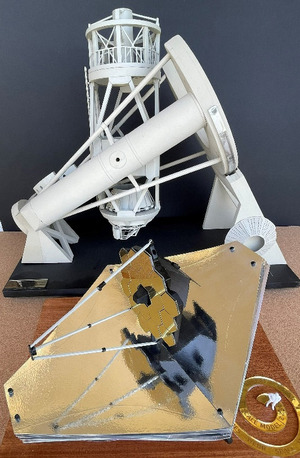

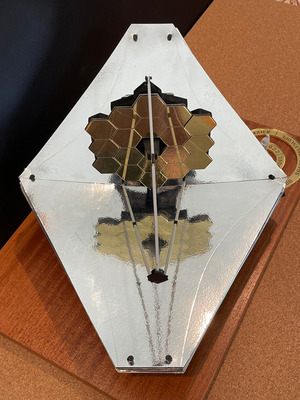

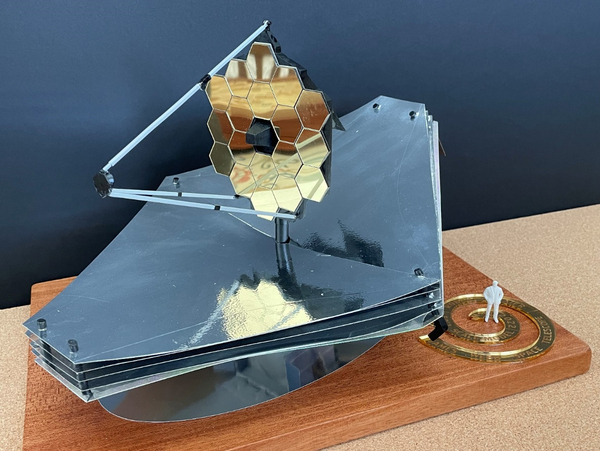

Laser cutting part of a 50:1 model of the James Webb Space Telescope

50:1 scale model of the James Webb Space Telescope with laser-cut and etched gold-mirror acrylic and gold-mirror styrene parts.

Retired aerospace engineer Robert Maier shared with us this awesome 50:1 scale model of the James Webb Space Telescope (JWST) he made with his brother Mark and a little help from our custom laser cutting service. We cut the JWST’s main mirrors for him out of 1.5 mm gold mirrored styrene sheets from Midwest Products, and the hexagon patterns were laser etched onto the surface. He also had us laser cut various silicone bands to hold the moving pieces of the structure as the model folds/unfolds.

We more commonly work with 3 mm mirrored acrylic, but the model’s mirror required something thinner, and the more expensive styrene was perfect for the job. For comparison, the spiral label sitting beneath the figurine’s feet was cut from gold mirror acrylic.

Mark uses the model in the Astronomy 101 classes he teaches at San Jacinto College in Southern California. He recently wrote an article about the model, which is published in the April 2023 issue of Sky and Telescope magazine (it’s on page six). Additional photos of the model are included below, and even if you’re not a subscriber to the magazine, you can preview the article online.

Do you have a fun idea in mind that can benefit from laser-cut parts? Submit a quote request or contact us to discuss how we can help.

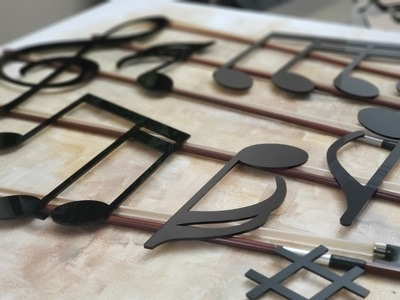

"Serenade"; 3D Mixed-Media Artwork by Tammy Carmona

Artist Tammy Carmona recently used our laser cutting service to help create her 3D mixed-media artwork, “Serenade”, pictured above. Carmona writes about the piece:

This mixed-media composition takes classical elements of music, and combines them with art in a decadent, delicious “feast for the eyes”.

The piano-glossy notes, the authentic violin bows and an actual violin, the laser-cut butterflies, the preserved roses – each element brings a different sensation and a different meaning.

Themes of rebirth and remembrance permeate this piece. The old violin is reborn with bursts of flowers, the old bows have found a new life supporting musical notes, the flowers are real preserved roses.

The music notes were laser cut out of black cast acrylic, which we stock regularly. The title sign for the piece, pictured below, was cut from the same material and raster engraved. A nice feature of the cast acrylic used in this piece is that it turns frosty white when engraved, which provides high contrast engravings.

For a more detailed description of Carmona’s piece and additional pictures, visit her website here. More information about our laser cutting service can be found here.

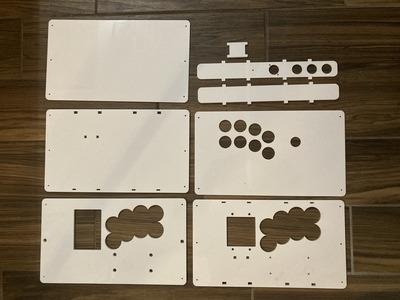

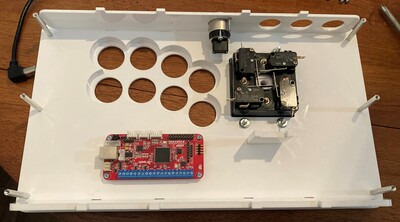

Curtis's laser-cut arcade stick case

Hello, I’m Curtis, an engineering intern at Pololu. I’m studying mechanical engineering at the University of California, Irvine. I’ve been playing a lot of Tekken during the pandemic. In fighting games like Tekken, a lot of people use arcade sticks to play, so I wanted to build my own.

I built an arcade joystick case using acrylic parts (3mm and 6mm thickness) that I made with our Custom Laser Cutting Service, along with various M-F standoffs, F-F standoffs, screws, and nuts.

I designed the case myself in Solidworks. I decided on a length and width of 8" × 14" because that makes it large enough to be comfortable, while being small enough to fit in a backpack and carry around. The positioning of the buttons and joystick is based on Hori arcade sticks, with some modification to fit my hands. The difficult part was figuring out how to mount all the components. I ended up layering the acrylic pieces to form the top and bottom plates. This let me mount components in between the layers, which hid screws and made the case look better. I was also able to cut holes to position vertical supports, like the front and back walls, to increase the case’s rigidity.

It’s designed to fit:

- 8 × 30mm and 3 × 24mm Sanwa buttons

- Neutrik USB type A to B pass-through

- Brook Wireless Fighting Board

- IST Alpha 49s joystick

PCBs from Brook are popular for arcade sticks. They have low latency and are compatible with PC and consoles. Button and joystick choices are based on personal preference, similar to mechanical keyboard switches. Sanwa buttons are popular as well, and pretty standard in a lot of arcade cabinets, so I picked them because I’m used to them. I chose the IST joystick because the joystick tension is stiffer, which I prefer because it makes quick movements easier.

It can be a little tricky to put together. I didn’t realize that the joystick switches had tabs that extended beyond the sides of the joystick, so it couldn’t slide into the case. To get around this, the joystick just has to be taken apart and put back together inside the case.

Overall the case works really well. I was worried that the acrylic wouldn’t be stiff enough, but the case is rigid, and all the components fit.

You can download my CAD files (.DXF and .CDR) for the laser cut parts here (97k zip) to cut out the same case I designed, or as a starting point to design your own.

Video from content creator Curio Res: How to control a DC motor with encoder

Content creator Curio Res recently released a tutorial and accompanying video explaining how to control a DC motor with an encoder. The video and post cover how to set up a motor with an encoder, a controller, and a motor driver, and how to read the encoder signals. They also address common questions we get from customers who want to add closed-loop feedback to their projects, such as how to implement a PID algorithm to control the position of the motor shaft based on the encoder readings. The content is well explained, and the diagrams and motion graphics make everything easy to follow and understand.
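To give a feel for what a PID position loop looks like, here is a minimal sketch in Python. It is not the code from Curio Res’s tutorial: the gains, the ±255 output limit (chosen to resemble an 8-bit PWM command), the toy motor model, and all names are our own assumptions for illustration. On real hardware, the measured position would come from the encoder counts and the output would go to the motor driver.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Basic PID controller with a clamped output (all values are assumptions)."""
    kp: float
    ki: float
    kd: float
    out_limit: float = 255.0  # assumed command range, like an 8-bit PWM value
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, target: float, measured: float, dt: float) -> float:
        error = target - measured
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        candidate = self.integral + error * dt
        raw = self.kp * error + self.ki * candidate + self.kd * derivative
        clamped = max(-self.out_limit, min(self.out_limit, raw))
        if raw == clamped:
            # Simple anti-windup: only accumulate the integral while unsaturated.
            self.integral = candidate
        return clamped


def simulate(target_counts: float, steps: int = 3000, dt: float = 0.001) -> float:
    """Drive a toy motor model toward target_counts; return the final position."""
    pid = PID(kp=2.0, ki=0.5, kd=0.02)  # hypothetical gains, not tuned for real hardware
    position = 0.0  # simulated encoder counts
    velocity = 0.0  # counts per second
    for _ in range(steps):
        command = pid.update(target_counts, position, dt)
        # Toy motor: speed relaxes toward 20 counts/s per command unit
        # with a 50 ms time constant (made-up numbers).
        velocity += (20.0 * command - velocity) * dt / 0.05
        position += velocity * dt
    return position


if __name__ == "__main__":
    final = simulate(1000.0)
    print(f"Final position: {final:.1f} counts (target 1000)")
```

The same structure carries over to a microcontroller: read the encoder, compute the error, update the three terms once per loop, and clamp the result to what the driver accepts. The gains would need to be tuned for the actual motor.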

The tutorial uses one of our 37D Metal Gearmotors and our TB67H420FTG Motor Driver Carrier. The tutorial also uses an Arduino Uno, but one of our A-Star 32U4 Primes could be used instead.

If you like Curio Res’s content, be sure to subscribe to their YouTube channel so you don’t miss their latest videos. We look forward to seeing more great tutorials from Curio Res!

3pi+ featured in community member's intro to robotics video series

Customer and forum user Brian Gormanly (known as bg305 on our forum) just released the first video in his new Arduino Lab Series: Introduction to Robotics: Building an Autonomous Mobile Robot. Brian writes, “Throughout this series we will be introducing topics on building and programming an autonomous mobile robot! You can follow along with each lab adding amazing new behaviors to your robot projects and learning the algorithms and tuning techniques that produce incredible robots!”

Brian is using our new 3pi+ 32U4 Robot in his videos, and judging from the first one, the series looks like it will be a great introduction to robotics and the 3pi+! Subscribe to Brian’s channel, Coding Coach, to catch each video as it is released.

Starlite by Grant Grummer

Grant Grummer used our laser cutting service to create 6- and 8-point acrylic stars for his project, Starlite: A programmable star-shaped canvas for displaying light patterns.

Starlite uses a 3mm thick laser-cut piece of translucent white acrylic (#7328 white, also called “sign white”) for the front face. The LEDs mount onto a thinner (1.5mm thick) piece that has rectangular cutouts that allow the LEDs to connect to the controls, the main control board, and an UPduino daughter board.

You can read more about Starlite on Grant’s Make: Projects and GitHub pages.

If you are interested in making a similar light display, be sure to check out our selection of LED strips!

PULSE: A pendant to warn you when you touch your face

PULSE pendant by JPL.

PULSE is a 3D-printed wearable device designed by JPL that vibrates when a person’s hand is nearing their face. It’s based around our 38 kHz IR Proximity Sensor, and was designed to be relatively easy to reproduce (it doesn’t require a microcontroller or programming, but you do need access to a 3D printer to make the case). The project is open-source hardware, with complete instructions, design files, and a full parts list available on GitHub.

These are the parts that can be purchased from Pololu:

- Pololu 38 kHz IR Proximity Sensor, Fixed Gain, High Brightness

- 10×2.0mm or 10×3.4mm shaftless vibration motor

- Mini Slide Switch: 3-Pin, SPDT, 0.3A

- Wire

Here’s a short demo of our intern Curtis using a PULSE pendant he made himself:

You can find more information about the PULSE pendant on the PULSE website.