Pololu Blog » Engage Your Brain »

Abstractions

We should consider the general concept of abstraction in robotics a bit before moving on to more specific topics. Abstraction comes up a lot in computer science and programming, so I think people in that field are exposed to it early and often. Just about any program will have at least some user-made abstractions in it, be it a data structure or a subroutine, so programmers tend to be aware that the abstractions are just whatever they choose to make them and that they are not necessarily statements of absolute fact. In other introductory engineering contexts, at least in my experience, there is less of an explicit acknowledgment of the abstractions being used. There’s all kinds of talk of models and approximations, and there are probably disclaimers at the beginning of the classes or texts that warn students of the limitations of the models. Perhaps those disclaimers come so early that by the time students learn the models, they have forgotten that they are just simplifications. It’s also possible that the people I am thinking of have not had any formal engineering education, but whatever their backgrounds, I have seen many customers thoroughly confused when an abstraction is violated.

Hobby servos are a popular abstraction, and they’re literally black boxes!

An abstraction is a generalization or simplification we make to deal with complexity. A typical example is the interface to a car: you can learn the basic controls and then apply that understanding to multiple cars without relearning how to drive each car separately and without even understanding the details of how the steering wheel is coupled to the wheels. In robotics, just about every component is an abstraction; we can use bumper switches, motors, batteries, wires, and integrated circuits without knowing or worrying about all the details of how they work. When we deal with complex systems that are made up of thousands of components that are in turn made up of thousands of components, we cannot keep track of every smallest component. Instead, we try to group and categorize the parts into manageable chunks that we expect to characterize with a higher-level description, so that we think of a gearbox instead of the individual gears and axles making it up, and we think of a microcontroller instead of the individual transistors it’s made of. The grouped entity is often called a “black box” since its internal workings are unknown, and we rely instead on descriptions of what the black box does, not how it does it.

We use another type of abstraction when we simplify components that we could characterize further if we needed to. For instance, we could determine the actual resistance of a piece of wire, but we usually know that whatever it is, it’s low enough that we don’t need to worry about it. We therefore usually think in terms of ideal wires with zero resistance, even though we do not actually have such wires. Similarly, we might think of batteries as ideal voltage sources that give us a constant voltage no matter how much current we draw from them. We make these simplifications because they make life easier and because we can usually get away with them.
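To make the battery and wire simplifications concrete, here is a minimal Python sketch that replaces the ideal voltage source with a slightly better (but still simplified) model: an ideal source in series with a fixed internal resistance, feeding the load through wires of nonzero resistance. The function names and every number in it (a 7.2 V pack, 0.15 Ω internal resistance, 0.05 Ω/m wire) are hypothetical, chosen only to show the ideal picture holding up at small currents and drifting as the current grows.

```python
def terminal_voltage(emf_v: float, internal_ohms: float, current_a: float) -> float:
    """Battery modeled as an ideal source in series with a fixed internal
    resistance (itself a simplification): V = EMF - I * R_internal."""
    return emf_v - current_a * internal_ohms


def wire_drop(ohms_per_m: float, length_m: float, current_a: float) -> float:
    """Voltage lost across a wire with uniform resistance per meter: V = I * R."""
    return current_a * ohms_per_m * length_m


if __name__ == "__main__":
    # Hypothetical values for illustration only, not from any datasheet.
    EMF_V = 7.2            # open-circuit battery voltage, volts
    INTERNAL_OHMS = 0.15   # assumed internal resistance, ohms
    WIRE_OHMS_PER_M = 0.05 # assumed wire resistance, ohms per meter
    WIRE_LENGTH_M = 0.5    # total wire length, out and back, meters

    for amps in (0.1, 1.0, 5.0):
        at_load = terminal_voltage(EMF_V, INTERNAL_OHMS, amps) - wire_drop(
            WIRE_OHMS_PER_M, WIRE_LENGTH_M, amps
        )
        print(f"{amps:4.1f} A -> {at_load:.2f} V at the load "
              f"(ideal model says {EMF_V:.2f} V)")
```

At 0.1 A the load sees nearly the full 7.2 V, so the ideal model is good enough; at 5 A, nearly a volt has disappeared. A real battery’s internal resistance also changes with charge state, temperature, and age, so this model is just another abstraction with its own limits.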


But sometimes we don’t get away with it. An abstraction’s betrayal produces two kinds of confusion. The first is the infuriating bewilderment before you discover which assumption is wrong: everything seems like it should work, yet the system doesn’t, and you start doubting every measurement you make and questioning the intentions of friends and technical support staff trying to help you out. The second is the aimless despair after you realize that a fundamental pillar of your understanding of the world has shattered, when you wonder whether anything you built before actually worked the way you thought it did. It might not be that dramatic; you might just be slightly curious about why your dog doesn’t run very fast when you sit on it, or you might not know right away what to do with the fact that wires don’t have zero resistance.

Unless you are particularly small and your dog is particularly large, it should be obvious that sitting on it will slow it down. Of course, all kinds of things are obvious once you know them, but what can make electronics particularly difficult is that it’s so easy to violate some abstractions without knowing it. Even if you don’t imagine ahead of time that sitting on your dog will slow it down, it’s very easy to notice once it’s happening. For the most part, on the scale of small educational and hobby robots, we don’t really push materials to their mechanical limits, and when we do, we can just see with our eyes when parts start to flex or shavings build up at some friction point or hair gets caught in a gearbox. Failures are also usually slow enough that we can see them happen, and if a part snaps in two, we can later look at where it broke. I am not suggesting that difficult or subtle problems don’t exist with mechanical systems; it’s just that on the personal robotics scale, we are unlikely to encounter problems equivalent to wings falling off planes or bridges collapsing. For the most part, when you use a screw, you don’t have to worry about how much it can hold, and in the exceptional case where a screw is your weak point, it practically announces itself to you.

|

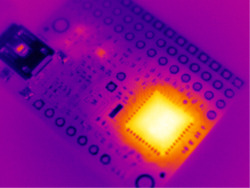

Which of the thousands of components in this “black box” is making it heat up? |

|---|

With electronics, on the other hand, we are often close to the physical limits of components without even knowing it. It’s easy to route substantial amounts of power through microscopic components so small that we have no intuitive feel for what is reasonable, and when a component fails, it can instantly self-destruct with little warning and, for those of us without extremely expensive equipment, with no evidence of what failed. It might seem a bit unfair to compare a screw to an integrated circuit, but for most of us, that is the inherent difference in scope between mechanical and electronic parts: if you get a mechanical assembly with thousands of components, you can probably take it apart down to the level of individual screws and gears; you probably cannot do that with an integrated circuit.
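One reason it’s so easy to get close to a limit without noticing is that resistive heating grows with the square of the current (P = I²R). The sketch below assumes a hypothetical small surface-mount resistor of 0.1 Ω with an assumed 0.25 W rating; the function names and numbers are illustrative only and not taken from any datasheet.

```python
def dissipation_w(current_a: float, resistance_ohms: float) -> float:
    """Power turned into heat in a resistive element: P = I^2 * R."""
    return current_a ** 2 * resistance_ohms


def margin(rating_w: float, actual_w: float) -> float:
    """Ratio of rated to actual dissipation; below 1 means over the rating."""
    return rating_w / actual_w


if __name__ == "__main__":
    # Hypothetical part: 0.1 ohm with an assumed 0.25 W power rating.
    RATING_W = 0.25
    RESISTANCE_OHMS = 0.1

    for amps in (0.5, 1.0, 2.0):
        p = dissipation_w(amps, RESISTANCE_OHMS)
        print(f"{amps:.1f} A: {p:.3f} W, margin {margin(RATING_W, p):.2f}x")
```

Going from 1 A to 2 A quadruples the heat, turning a comfortable 2.5x margin into a part running well past its rating, even though nothing about the circuit looks any different.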

The difficulty with abstractions in electronics is not limited to the complexity of the smallest components: the general concepts also require more abstract thought because the basic phenomena involved are beyond our senses and often beyond our comprehension. We can see, feel, and hear physical movement, we can move ourselves, and we can move other objects. We can look at marvelously intricate mechanisms from clocks to piston engines, and we can build up our understanding starting with direct observation. That kind of direct observation just isn’t an option for a cell phone or even a garage door opener, and it has nothing to do with the size or quantity of the components; with a few exceptions like visible light, we just cannot sense the electrons and electromagnetic waves flying around us. These things are real and all around us, but we cannot tell if they are there or not. So we have to devise complicated instruments to give us some measurements of these phenomena, and we have to devise complicated models and analogies and abstractions just to try to comprehend them.

So, when working with electronics, we have little choice but to accept very complicated components as black boxes that we might very well be using close to all kinds of real, physical limits. The physical existence of the components also makes electronics unlike the abstract constructs on the programming side of robotics, where if something is wrong, we can at least run the program again and again without destroying real objects that might cost a lot of time and money to recreate after each failure.

Arduinos are another popular abstraction.

The point of all this is not to say despair is justified or to cover my ass for the case where a product I sell you doesn’t work. Rather, my point is to raise your awareness of these abstractions and the inherent complexity involved in creating even a relatively simple robot. Anything others might do to try to simplify (or ignore!) the fundamental engineering problems is likely just creating another layer of abstraction, and as you advance past the intended scope of that framework, you will encounter the limits of the abstraction. Here are some tips for dealing with the inevitable breakdown of an abstraction you’re counting on:

- Check your premises – We’re talking about a situation where something you think should work does not. I often hear lamentations along the lines of “I don’t know why this doesn’t work” or “there’s no reason this shouldn’t work.” Assuming everything is actually hooked up as you think it is, it might be time to start asking, “Why do I expect this to work?” Which assumption is least grounded in reality? When you do find a discrepancy between your expectations and your results, don’t just assume it doesn’t matter or that your measurement is wrong. (“Yeah, that part doesn’t make sense, but it shouldn’t be causing the problem” is a good way to miss the problem.)

- Simplify – Simplifying your system to the smallest instance that shows the problem is crucial both to good troubleshooting and to communicating your problem to the people you ask for help. The more subsystems you confirm are working as expected, the more you can narrow down the problem. Keep in mind that even once removing one part makes the problem go away, you might be able to simplify further, and the part that seems correlated with the problem might not actually be responsible for it. For instance, if you have a hundred parts and find that your problem goes away when you remove one, it would be even more useful to determine that you can remove another ninety parts, reducing your problem to one that shows up with ten parts in the system and does not show up with nine.

- Don’t push the limits – Do you have several amps coursing through your breadboard or 127 devices connected to your USB port? The closer you are to various limits, the less margin for error you have.

- Get some test equipment (and use it!) – As I mentioned earlier, part of the difficulty with electronics is that we cannot directly perceive the phenomena we are working with. Therefore, it’s crucial to obtain the best test equipment you can afford or borrow. Often, a quick measurement will show which assumption is wrong. I have encountered many customers who have a meter but don’t check some basic voltages, and many students who have access to good test equipment but don’t feel like going to the lab. Use your test equipment! Basic equipment you should have includes a multimeter and an oscilloscope. And speaking of abstractions, you should have some appreciation of how your equipment works and how it might affect the very things you are trying to measure (that’s a topic for another post).

- Learn more theory – If your design should work in theory but doesn’t in practice, your theory probably needs work. I’ll move to more specific topics soon that I hope will help.
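The reduction described under “Simplify” can be written down as a procedure. Below is a minimal Python sketch of one simple approach, greedy one-part-at-a-time removal (loosely in the spirit of delta debugging), assuming a hypothetical `problem_shows` check that says whether a given set of parts still reproduces the problem. With real hardware, that “function” is you pulling a part, power-cycling, and testing again. The sketch also assumes the parts are distinct and that the full system shows the problem to begin with.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")


def minimize(parts: List[T], problem_shows: Callable[[List[T]], bool]) -> List[T]:
    """Greedily remove parts one at a time, keeping a removal only if the
    problem still shows up. The result is 1-minimal: removing any single
    remaining part makes the problem go away."""
    if not problem_shows(parts):
        raise ValueError("the full system has to show the problem before reducing it")
    current = list(parts)
    changed = True
    while changed:  # repeat passes until no single removal still reproduces it
        changed = False
        for part in list(current):  # iterate over a snapshot; `current` shrinks
            candidate = [p for p in current if p != part]
            if problem_shows(candidate):
                # The problem persists without this part, so drop it for good.
                current = candidate
                changed = True
    return current


if __name__ == "__main__":
    # Hypothetical system: parts numbered 0-99, where the problem appears
    # only when all ten of these particular parts are present together.
    culprits = {3, 8, 15, 22, 41, 57, 63, 70, 88, 99}

    def problem_shows(present: List[int]) -> bool:
        return culprits.issubset(present)

    print(sorted(minimize(list(range(100)), problem_shows)))
```

Every part left at the end is necessary, which matches the ten-parts-versus-nine situation above. The result is not guaranteed to be the smallest possible reproduction, and each step costs a full re-test, so removing larger chunks at once (when you can) may get you there faster.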
